1. Virtual Surveyor: Object-based image analysis of airborne LiDAR point cloud data

Developed a fully automatic algorithm that first segments and then identifies salient objects and sub-objects, both natural and man-made, in airborne LiDAR point cloud data of a given terrain. Performing segmentation before classification is what makes object-based image analysis so popular: the segments provide crucial information about each object, such as its shape, size, and context, which the classifier can then leverage during the identification process.
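To make "shape, size, context" concrete, here is a minimal Python sketch that derives a few per-object features from a segmentation result before classification. It assumes the segments are available as an integer label grid (0 = background) rasterized from the point cloud; the function name `object_features` and the chosen features (area, bounding-box extent, elongation) are hypothetical illustrations, not the feature set used in Virtual Surveyor.

```python
import numpy as np


def object_features(labels):
    """Hypothetical per-segment shape/size features from an integer label grid.

    labels: 2D array where 0 is background and each positive integer is one segment.
    """
    feats = {}
    for lab in np.unique(labels):
        if lab == 0:  # skip background
            continue
        rows, cols = np.nonzero(labels == lab)
        area = rows.size  # number of grid cells in the segment
        height = rows.max() - rows.min() + 1  # bounding-box size in cells
        width = cols.max() - cols.min() + 1
        feats[int(lab)] = {
            "area": int(area),
            "extent": area / (height * width),  # how much of the bounding box is filled
            "elongation": max(height, width) / min(height, width),
        }
    return feats
```

A classifier could then use, for example, high elongation to separate linear structures such as walls or roads from compact ones such as tree crowns.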

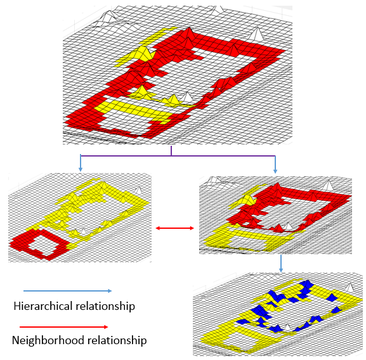

My proposed segmentation method is designed to tackle two main challenges facing object-based image analysis: scale dependency and the relative complexity of real-world objects. The result is a non-parametric, scale-independent algorithm capable of automatically segmenting not only complex natural objects, such as a forest, but also each individual tree within it. This hierarchical segmentation takes place at a single scale level.

How does it work? The method rests on the premise that an object, no matter how complex, is connected to its topographic background through a single sloping surface that encloses the entire object and whose altitude with respect to the background is strictly monotonic in the direction of maximum slope. One example of such a sloping surface is the shore of a small pond: it is a closed surface that encompasses the entire pond, and its outer edge is the boundary of the object. Therefore, if the shore can be mapped, the entire pond can be segmented out.

To map such a surface, we first construct a directed graph from the mesh representation of the terrain's point cloud, with nodes representing the cells of the mesh and edges running perpendicular to the direction of maximum slope. For example, if the nodes belong to the shore of a pond, the edges connect them like a chain that follows the shore, since the shoreline is perpendicular to the direction of maximum slope. As a result, each object and sub-object present in the scene is encircled by a cycle graph. Detecting these cycles simultaneously reveals the presence of the objects and sub-objects, their positions, their hierarchical relations, and their associated voxels. Thereby, objects of all sizes and shapes are extracted at a single scale level.
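The graph construction and cycle detection described above can be sketched in Python on a gridded elevation model instead of a mesh. This is a minimal illustration under stated assumptions: the neighbour rule (among the 8 neighbours on one consistent side of the gradient, step to the one with the smallest elevation change, breaking ties by the step most perpendicular to the gradient) and all function names are hypothetical, and the sketch only finds cycles; it does not build the object hierarchy. Because each cell has at most one outgoing edge, every walk either stops or falls into exactly one cycle, so detection touches each cell once.

```python
import numpy as np

# 8-connected neighbour offsets as (d_row, d_col)
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, -1), (0, 1), (1, -1), (1, 0), (1, 1)]


def build_contour_graph(z, eps=1e-9):
    """Map each cell (row, col) to one successor roughly perpendicular to the slope.

    Hypothetical rule: only neighbours on one consistent side of the gradient are
    considered (positive cross product), and the one with the smallest elevation
    change wins, ties broken by the step most perpendicular to the gradient.
    """
    g_row, g_col = np.gradient(z)  # slope components along rows and columns
    rows, cols = z.shape
    succ = {}
    for r in range(rows):
        for c in range(cols):
            gr, gc = g_row[r, c], g_col[r, c]
            gnorm = np.hypot(gr, gc)
            if gnorm < eps:  # flat cell: no maximum-slope direction, no edge
                continue
            candidates = []
            for dr, dc in OFFSETS:
                nr, nc = r + dr, c + dc
                if not (0 <= nr < rows and 0 <= nc < cols):
                    continue
                if gr * dc - gc * dr <= 0:  # keep a consistent sense of rotation
                    continue
                dz = abs(z[nr, nc] - z[r, c])
                cos = abs(gr * dr + gc * dc) / (gnorm * np.hypot(dr, dc))
                candidates.append((dz, cos, (nr, nc)))
            if candidates:
                succ[(r, c)] = min(candidates)[2]
    return succ


def find_cycles(succ):
    """Return every cycle in a graph where each node has at most one successor."""
    visited, cycles = set(), []
    for start in succ:
        if start in visited:
            continue
        path, position = [], {}
        node = start
        # walk until the path ends, reaches an earlier walk, or closes on itself
        while node is not None and node not in visited and node not in position:
            position[node] = len(path)
            path.append(node)
            node = succ.get(node)
        if node is not None and node in position:
            cycles.append(path[position[node]:])
        visited.update(path)
    return cycles
```

On a small square pyramid, for instance, the eight cells surrounding the summit form one closed cycle, while on a uniformly tilted plane every walk runs off the grid and no cycle is found, mirroring the premise that only enclosed objects produce cycles.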

2. SkinSim: A Simulation Environment for Multimodal Robot Skin

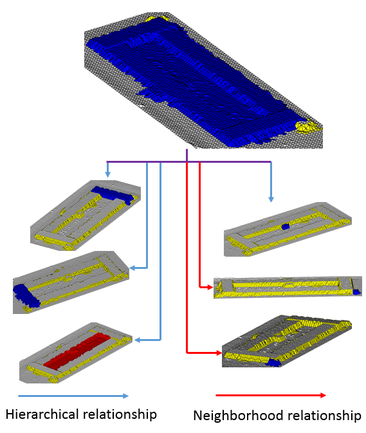

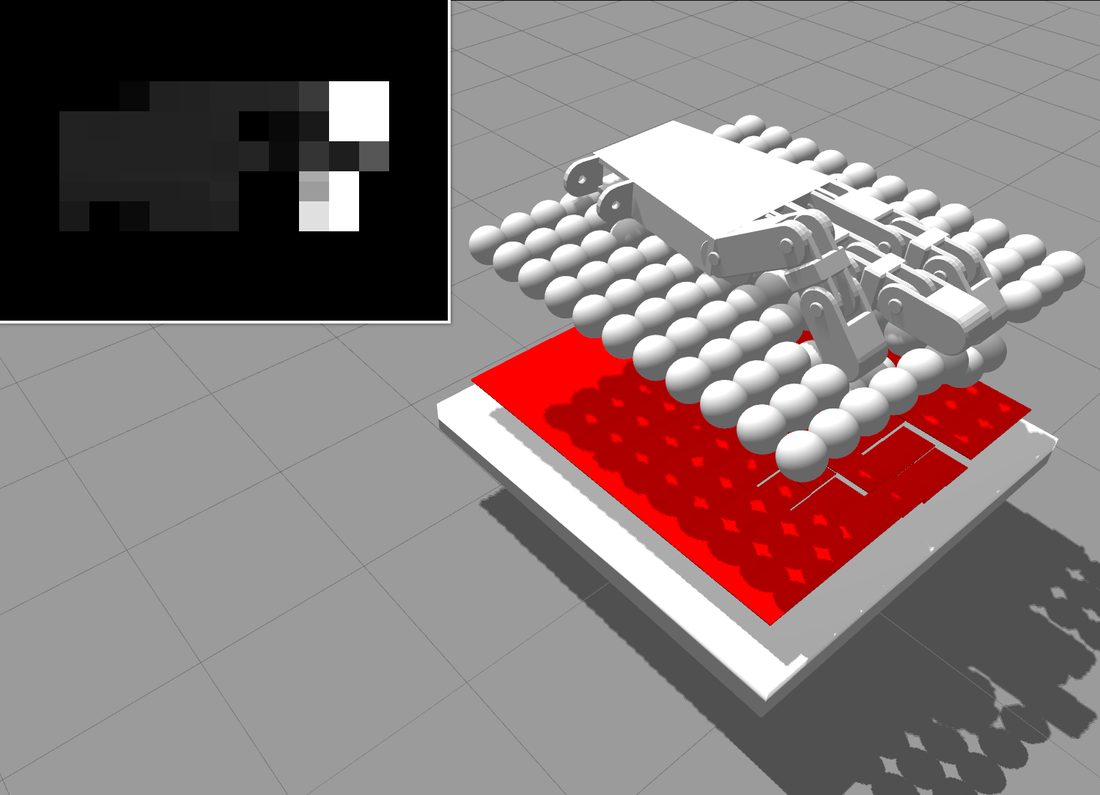

In this work, we designed and developed SkinSim, a new simulation framework for multi-modal, multi-resolution robot skin, aimed at solving complex design problems. SkinSim is implemented on the ROS and Gazebo simulation infrastructure supported by the Open Source Robotics Foundation, and is therefore shared with the community. The robotic skin is simulated using multi-element spring-mass-damper models, which are computationally efficient, easily parallelized, and experimentally verifiable. In a case study, the response of a physical tactile sensor was experimentally characterized, and the corresponding reduced-order models were implemented in the simulation environment.
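The spring-mass-damper idea can be illustrated with a minimal Python sketch: an array of independent skin elements, each obeying m·x'' + c·x' + k·x = F, integrated together with semi-implicit Euler. The vectorized update is what makes such models easy to parallelize. The parameter values and the function name `simulate_skin` are hypothetical; this is not SkinSim's Gazebo plugin, and it omits coupling between neighbouring elements.

```python
import numpy as np


def simulate_skin(forces, m=0.01, k=100.0, c=1.0, dt=1e-4, steps=5000):
    """Integrate N independent spring-mass-damper elements under constant normal forces.

    Hypothetical parameters: mass m (kg), stiffness k (N/m), damping c (N*s/m).
    Returns the indentation of each element (m) after `steps` time steps.
    """
    forces = np.asarray(forces, dtype=float)
    x = np.zeros_like(forces)  # indentation of each element
    v = np.zeros_like(forces)  # velocity of each element
    for _ in range(steps):
        a = (forces - k * x - c * v) / m  # Newton's second law, all elements at once
        v += a * dt  # semi-implicit (symplectic) Euler: update velocity first
        x += v * dt  # then position, using the new velocity
    return x
```

With these values the elements are underdamped (damping ratio 0.5) and settle well within the simulated 0.5 s, so each indentation approaches the static value F/k.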

Project Page · Video Link

3. Learning human-like facial expressions for the Philip K. Dick android

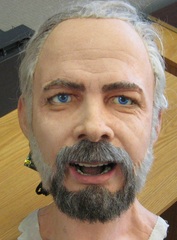

In this work, we used a neural network to map the rich facial features of a human onto an android capable of replicating them. The methodology is automated, marker-less, and highly general. As a result, it can be applied to any android face design, not just those exploiting linear or decoupled relationships between actuator inputs and facial feature point motions. The method uses a genetic search to generate a rich set of facial expressions.

Video Link

4. Coverage path planning for single- and multi-robot systems

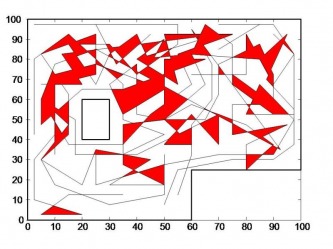

Here, I developed a novel coverage strategy based on a genetic algorithm that directs a team of very simple robots with limited sensing capability to cover an unknown terrain systematically, based solely on the footprint data left by a randomized path-planning robot that previously operated in that area. The simulation test bed for the proposed coverage path-planning technique, as well as its performance evaluation, was implemented in MATLAB.
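The genetic-algorithm idea can be sketched in Python (rather than the original MATLAB) for a single robot on a grid. Everything here is an assumption for illustration: the genome is a fixed-length sequence of moves, the footprint map is a grid of visit counts left by the earlier randomized robot, and the fitness simply counts distinct cells visited that have no recorded footprints. Tournament selection, one-point crossover, mutation, and elitism are standard GA operators, not necessarily the ones used in the project.

```python
import random

MOVES = [(-1, 0), (0, 1), (1, 0), (0, -1)]  # N, E, S, W as (d_row, d_col)


def fitness(genome, footprints, start=(0, 0)):
    """Count distinct visited cells that the earlier randomized robot never stepped on."""
    rows, cols = len(footprints), len(footprints[0])
    r, c = start
    seen = {start}
    for gene in genome:
        dr, dc = MOVES[gene]
        nr, nc = r + dr, c + dc
        if 0 <= nr < rows and 0 <= nc < cols:  # moves off the grid are ignored
            r, c = nr, nc
            seen.add((r, c))
    return sum(1 for (i, j) in seen if footprints[i][j] == 0)


def evolve(footprints, length=20, pop_size=30, generations=40, p_mut=0.05, seed=0):
    """Hypothetical GA over move sequences; returns the best genome and best-fitness history."""
    rng = random.Random(seed)
    pop = [[rng.randrange(4) for _ in range(length)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        scored = sorted(pop, key=lambda g: fitness(g, footprints), reverse=True)
        best = scored[0]
        history.append(fitness(best, footprints))

        def pick():  # tournament selection of size 3
            return max(rng.sample(scored, 3), key=lambda g: fitness(g, footprints))

        children = [best[:]]  # elitism: the best genome survives unchanged
        while len(children) < pop_size:
            a, b = pick(), pick()
            cut = rng.randrange(1, length)
            child = a[:cut] + b[cut:]  # one-point crossover
            child = [rng.randrange(4) if rng.random() < p_mut else g for g in child]
            children.append(child)
        pop = children
    best = max(pop, key=lambda g: fitness(g, footprints))
    return best, history
```

Because of elitism, the best fitness never decreases from one generation to the next; a multi-robot version could, for example, concatenate one move sequence per robot in a single genome.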

Project Page

5. Blue Whale: A novel methodology for indoor positioning systems

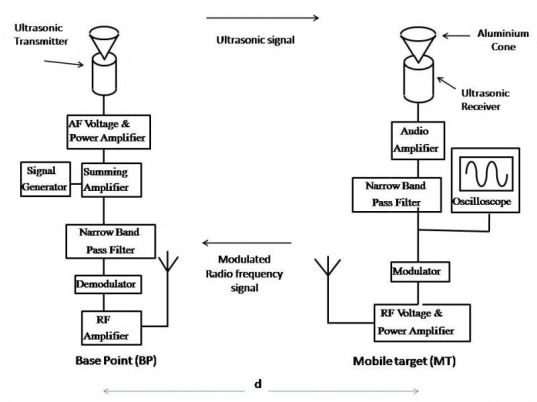

Here, I designed and implemented a new type of indoor positioning system, which I named Blue Whale. Unlike conventional ultrasonic indoor positioning systems, which are based on time of flight, it performs positioning with an ultrasonic standing wave established between the target and the base point. The resolution of the proposed system is determined by the acoustic wavelength, which is on the order of millimeters; consequently, the system yields more precise positioning information than existing ultrasonic indoor positioning systems.
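A quick calculation shows why the wavelength lands in the millimeter range. The minimal Python sketch below assumes a 40 kHz transducer (a common ultrasonic frequency, not stated above) and the usual linear approximation for the speed of sound in dry air. Since adjacent nodes of a standing wave are half a wavelength apart, it also shows a hypothetical way to turn a node count plus a fractional position into a distance; how Blue Whale actually extracts distance from the standing wave is not described here, so `distance_from_nodes` is an illustrative assumption.

```python
def speed_of_sound(temp_c):
    """Approximate speed of sound in dry air (m/s), linear in temperature (deg C)."""
    return 331.3 + 0.606 * temp_c


def wavelength(freq_hz, temp_c=20.0):
    """Acoustic wavelength (m) at the given frequency and air temperature."""
    return speed_of_sound(temp_c) / freq_hz


def distance_from_nodes(node_count, frac, freq_hz, temp_c=20.0):
    """Hypothetical distance estimate from standing-wave nodes spaced half a wavelength apart.

    node_count: whole number of node spacings; frac: fractional spacing in [0, 1).
    """
    return (node_count + frac) * wavelength(freq_hz, temp_c) / 2
```

At 40 kHz and 20 °C the wavelength is about 8.6 mm, so the node spacing is roughly 4.3 mm; resolving a fraction of that spacing gives sub-centimeter resolution, whereas a time-of-flight system must resolve arrival times on the order of microseconds to do the same.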

Project Page